AI ethicists worry about bias in models like ChatGPT. We wondered how this might play out for compensation benchmarking.

So we ran a really simple test. We asked ChatGPT: Can you share with me the compensation recommendation for a female Data Science in Seattle Tech?

ChatGPT spit out a bunch of numbers, ultimately landing on a base pay between $160,000 – $175,000 with 7+ years' experience.

We asked a follow-up. And how would that compare to a recommendation for men?

Completely different number: $180,000 – $220,000 base pay for 7+ years’ experience.

Now, we want to be fair: ChatGPT did give us a stern lecture on how pay shouldn’t be based on gender, but instead compensable factors like level and experience.

What it didn’t do: change the initial lowball offer for female Data Scientists.

An HR practitioner who accidentally asked for a benchmark for a “female data scientist” would receive a warning about bias. What they wouldn’t get? An accurate, unbiased salary range.

This bias is concerning to say the least. But it also raises a more fundamental question: can ChatGPT produce reliable benchmarks given the right inputs? No gender. No ambiguous variables. Just a clean prompt with the factors that matter most.

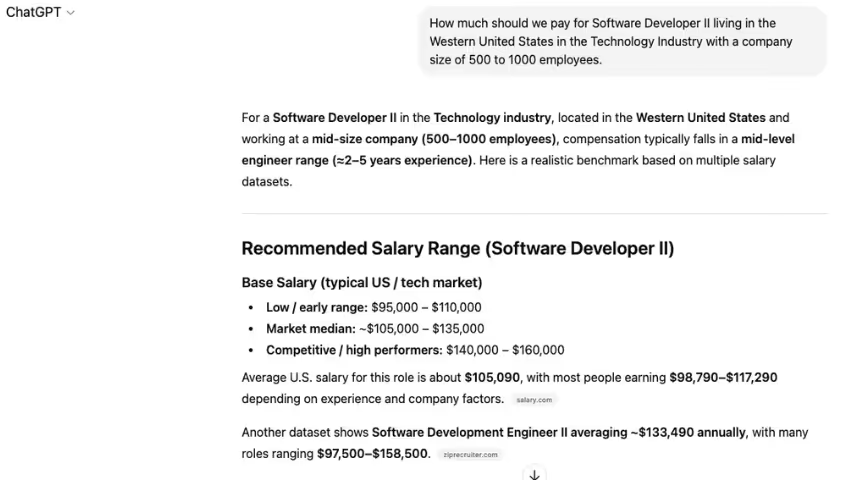

We asked it:

How much should we pay a Software Developer II in the western U.S. in the Technology industry at a company with 500 – 1,000 employees?

That was the prompt.

Some variables for ChatGPT to consider. But it touches on the important stuff. Job title. Location. Industry. Even company size.

Here was its answer:

ChatGPT actually gives two cited ranges. The average base salary is either $105,090 with a range of $98,790 – $117,290, according to one source.

Or it’s $133,490 on average with a range of $97,500 – $158,500, according to another.

This isn’t surprising. ChatGPT and other LLMs like Claude or Google Gemini build benchmarks on publicly available salary data — LinkedIn, Glassdoor, ZipRecruiter. That data is inherently unreliable and often disagrees.

What is the average salary? $105,090 or $133,490? It depends on what AI-reported salary source you trust. And that’s exactly the problem. You can’t trust either.

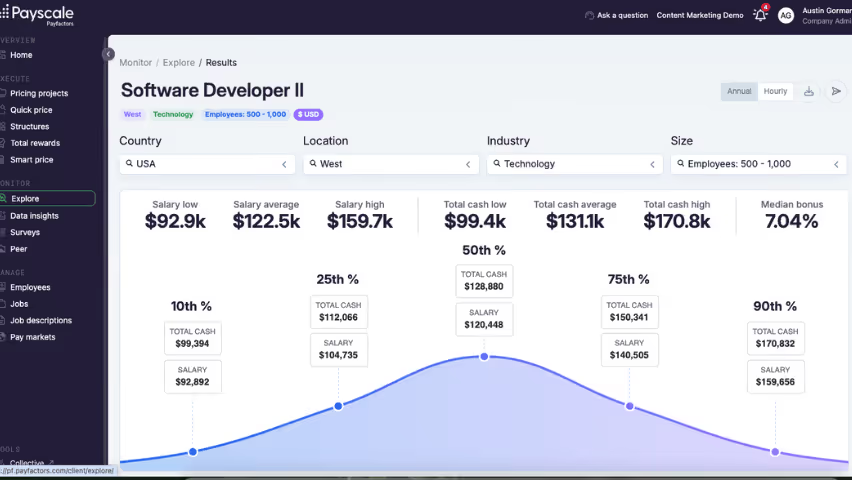

Now, let’s look at our AI benchmarking tool (Payscale Verse) for the same job and factors.

The top bar shows the job title and the data cuts from our original prompt. Looking at the left-hand side, we have the true base salary and range: $92,900 on the lower end (10th percentile), $122,500 as an average, and $159,700 on the upper end (90th percentile).

Right away, you can see the gap. Before jumping into why our model’s numbers are correct, let’s first consider the repercussions.

Taking the higher average salary from ChatGPT ($133,490) puts you closer to the 75th percentile. That’s 9% more than the actual market average.

With ChatGPT you might think you’re “meeting the market” at $130,000+. You’re actually inflating job offers by nearly 10%.

Now, let’s go one step further: multiply that 10% “ChatGPT salary premium” across every new hire. The damage to your compensation budget is staggering. Your CFO desperately needs an aspirin. And you have some explaining to do.

The problem isn’t AI. Instead, it’s the wrong AI model trained on the wrong data.

The difference between LLMs like ChatGPT and Payscale Verse is stark. And that difference becomes critical when examining the tasks HR and compensation professionals want to automate with AI.

HR’s growing demand for AI market pricing

Payscale’s 2026 Compensation Best Practices Report asked survey participants to select the tasks they’d most like to automate with artificial intelligence.

- 46% responded writing job descriptions

- 38% said ensuring accurate and consistent job leveling in job descriptions

- 28% reported market pricing and benchmarking

These were the top three.

In our survey, 42% of participants reported using general-use AI tools like ChatGPT or Claude. Another 38% purchased enterprise licenses for these tools.

Just 16% purchased HR or compensation tools specifically for their AI capabilities, while only 15% have used or evaluated AI-powered features in existing tools.

In fact, 22% of respondents said they trust general-purpose AI tools to support compensation benchmarking. That’s worrying.

They’re trading convenience for accuracy. And that isn’t an exchange any HR practitioner should make.

Asking ChatGPT for a salary range is as good as guessing. You’re getting an answer from whatever publicly available market data it can scrape. Job postings. Self-reported salaries. Heck, even Reddit.

It doesn’t have a methodology. It isn’t traceable. It’s just bad data.

There’s a world of difference between validated AI datasets built on HR-reported salary sources and whatever some chatbot “learned” from a Reddit thread.

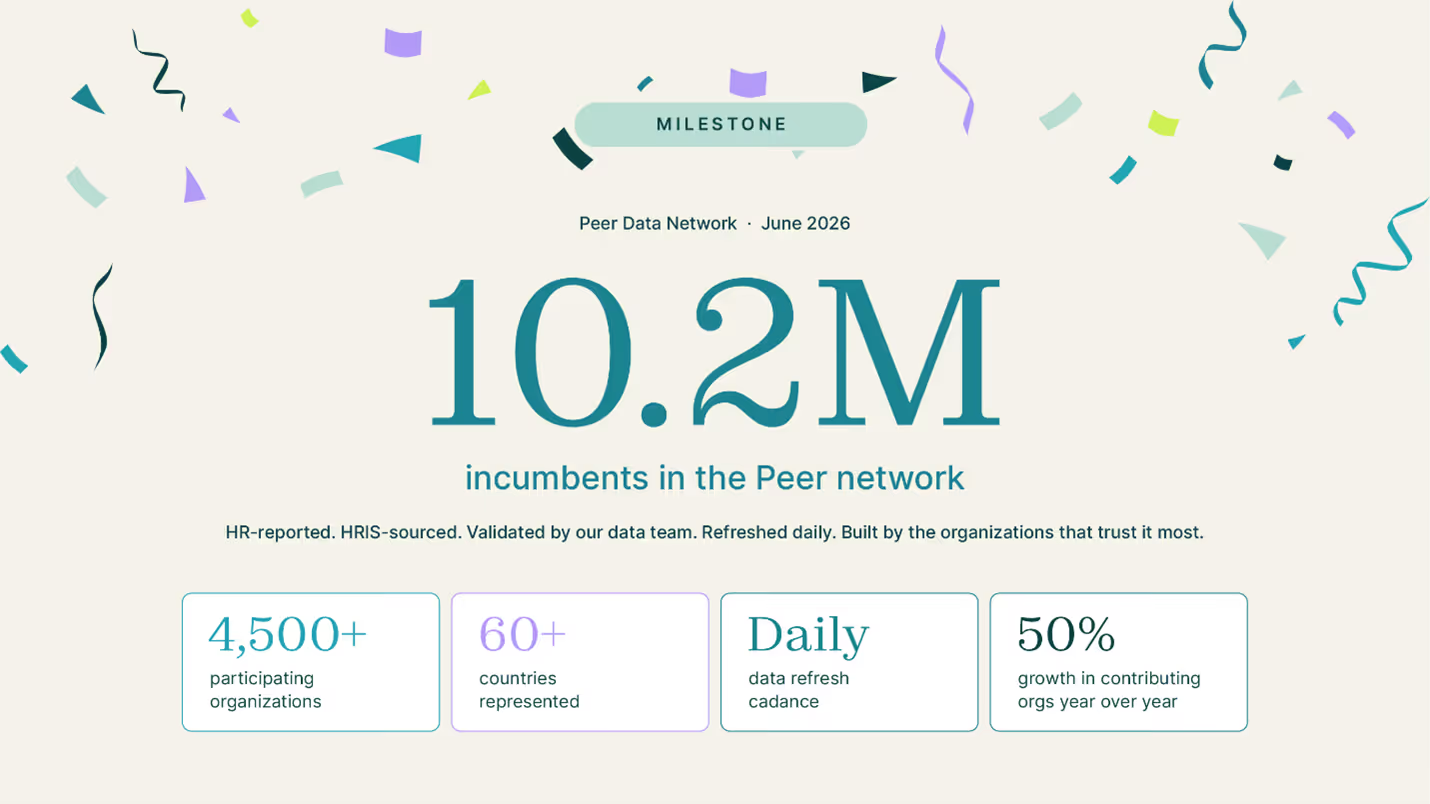

Survey-grade AI market data

HR practitioners get excited by fresh market data. And they should.

When new jobs pop up that didn’t exist three months ago, traditional survey data shows its limitations. When the market moves quickly, they reveal their age.

Yet surveys from traditional publishers remain the most trustworthy salary source. Why?

HR practitioners rely on surveys because they know where the data comes from. How it's validated. How it’s structured.

Surveys earn trust because they show their work. You know which companies participated. You can trace where numbers came from. And it’s built on what employers actually pay — not black box algorithms with dodgy calculations.

But so is Payscale Peer.

With more than 7,000 benchmarked jobs across 3,720+ organizations, it matches the best survey providers for data breadth.

Peer is not an alternative to surveys. It’s HR-reported, survey-grade market data refreshed nightly. And it’s the foundation of our AI market dataset Payscale Verse.

Here’s how Verse works: raw salary data comes in from participating companies (all 3,720 of them). We clean and validate it before it goes live in Peer. The Verse model fills in any existing data gaps by making legitimate assumptions — like “if we move from an Account I to Account II job (and everything else stays the same), pay shouldn’t decrease.”

Verse is the only AI benchmarking tool that applies the same statistical rigor of third-party survey providers.

An AI benchmarking solution for modern compensation

LLMs do some things well. Just not salary benchmarking. ChatGPT is trained on the entire internet. When it comes to salary data, there’s zero validation. Zero quality control.

Payscale Verse works differently. It’s built specifically for benchmarking and trained on HR-reported data from your closest competitors.

Before data gets within earshot of Verse, it goes through the same quality controls as traditional surveys.

First, every job gets matched to a consistent taxonomy.

Raw HRIS data comes in messy. Job titles have different meanings depending on the organization or other factors. An “Account Executive” in sales org is more senior. At a PR firm, it’s a junior position.

Before our data is ready for prime time, we match every job to a standard taxonomy.

This happens through:

- Compensation analysts reviewing client matches (yes, we still use humans)

- AI-powered auto-matching trained on thousands of matching decisions with an 87% acceptance rate

- Monthly taxonomy reviews to add new roles and refine matches

We’re also always on the hunt for outliers. A few bad data points can throw off an entire salary range. That’s why we run daily audits and toss out salaries significantly above or below the average.

And we don’t stop there. We have tools to help our team of compensation analysts find (and most importantly correct) bad matches and outliers.

Semantic similarity analysis. We use a custom embedding model to identify job title outliers. This model flags when a participating organization’s job title is materially different from the same job in Payscale’s taxonomy.

Compensation outlier detection. We compare all newly reported salaries to existing reported salaries matched to Payscale jobs. Data points that stray too far from the average are flagged for analyst review.

Why you can trust Verse

Verse isn’t a static model. It’s retrained every month on the latest HR-reported data and tested against holdout data before going live.

What’s holdout data?

Holdout data or test data is a portion of real salary data we don’t use during training.

Instead, we save it to test against the trained dataset. It’s like giving a student a final exam with new questions to verify they actually learned the material.

Only models passing a series of tests (size of training dataset, performance against hold-out data, and model convergence) make it into Verse.

We also audit Verse data regularly for:

Goodness-of-fit. Goodness-of-fit measures how well a model’s predictions match the actual data. It answers the question: “How close are my predicted values to what really happened?”

Think of it like this: you’re trying to predict how much it will rain next month based on historical patterns. Goodness-of-fit tells you whether your predictions are close to actual rainfall or wildly off.

Coverage. How much data falls between the 10th and 90th percentile for a salary range? This test measures if AI job pricing in Verse go too far beyond range or is too narrow.

Data dominance

Every AI market pricing depends on the quality of its training data. Verse is no exception. While Peer offers more priced jobs than many survey providers, it skews toward larger organizations with millions of incumbents. Traditional surveys do, too.

If Big Box Store has 10,000 Retail Cashiers in our dataset and a Mom & Pop has 15, we don’t want Big Box Store’s job pricing to become “the market.”

To prevent this, Verse uses a weighting system: we “down-weight” the importance of each employee at Big Box Store. This keeps market data balanced and representative, giving equal sway to jobs regardless of organizational size.

AI market pricing isn’t risky. ChatGPT is.

Done right, AI benchmarks offer invaluable market insights. With continuously refreshed HR-reported data, Payscale Verse leapfrogs traditional survey data for timeliness with identical quality and rigor.

ChatGPT (and other LLMs) give you answers. Unfortunately, they’re rarely (if ever) correct. The problem isn’t your ambition to use artificial intelligence to solve pricing riddles. It’s that LLMs aren’t up to the challenge.

If you want to see what real AI benchmarking looks like, book a demo. We’ll show you how your team can use artificial intelligence the right way.